The AICOM project aims to democratize AI research by turning the collection of training data for gaze estimation into an enjoyable game. Two participants play cooperatively, using eye gaze to communicate words while images of their faces are captured. In a demonstration experiment, the project received positive feedback, engaged a diverse group of people, and prompted discussions about gaze estimation AI and AI applications. Going forward, the project seeks to extend its approach to other areas of AI research, making that research accessible to a broader audience and incorporating diverse perspectives.

AI has attracted growing attention in recent years, and its applications and potential have captured the imagination of industry and researchers alike. However, this expansion also raises concerns about the black-box nature of AI systems. As AI becomes larger and more complex, it becomes harder for people to picture and understand how it is made. This lack of transparency raises ethical questions and could lead to unintended consequences. Training data is the backbone of machine learning models, and such datasets can carry biases that lead to unfair or discriminatory outcomes. Addressing these challenges requires democratizing AI research and development so that it is accessible not only to experts but also to the general public. The critical question is how to involve people in AI research, and this is where the AICOM project comes in.

A collaboration between the UTokyo-IIS Design-Led X Platform (DLX Design Lab) and the Yusuke Sugano lab, the AICOM project aims to engage a diverse audience in AI research. By turning the collection of training data for machine learning-based gaze estimation into an enjoyable game, we expect that people from various backgrounds will be able to participate in and contribute to the research process.

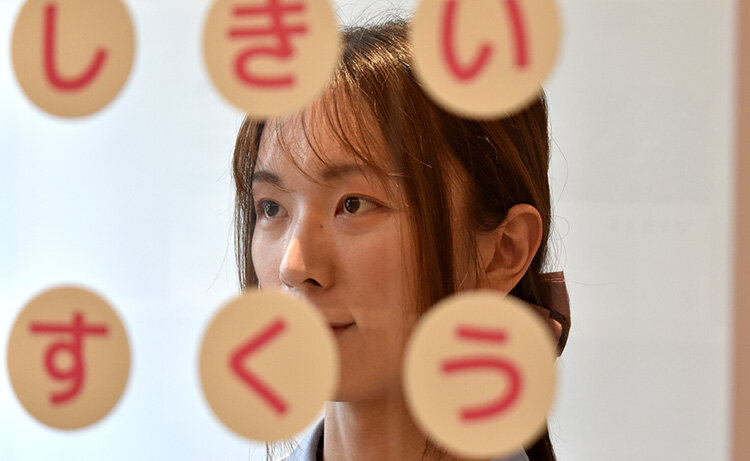

Gaze estimation is a technique for inferring where a person is looking. The Sugano lab has been developing machine learning-based techniques that estimate gaze using only ordinary cameras. Training such models requires many face images annotated with the correct gaze direction. The AICOM game is designed for two participants to play cooperatively, with the objective of using eye gaze to communicate words to the other player. Each player's face is captured while they look at letters on a transparent letter board, so the game yields a dataset of face images paired with ground-truth gaze directions.
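As a rough illustration of how a known target letter can become a ground-truth gaze label, the sketch below converts a letter's position on a flat board into a gaze direction. It is not the project's actual pipeline. The function names, the grid layout of the board, the coordinate frame (x to the right, y downward, z from the eye toward the board, in metres), and the assumption that the 3D eye position is already known are all hypothetical.

```python
import math

def letter_position(row, col, origin, spacing):
    """Centre of the letter cell (row, col) on a flat board.

    Hypothetical layout: `origin` is the 3D position of cell (0, 0),
    cells are `spacing` metres apart, and the whole board lies at the
    constant depth origin[2].
    """
    return (origin[0] + col * spacing, origin[1] + row * spacing, origin[2])

def gaze_direction(eye, target):
    """Unit vector pointing from the eye to the target letter."""
    delta = [t - e for e, t in zip(eye, target)]
    norm = math.sqrt(sum(c * c for c in delta))
    if norm == 0:
        raise ValueError("eye and target coincide")
    return tuple(c / norm for c in delta)

def to_yaw_pitch(direction):
    """Convert a unit gaze vector to (yaw, pitch) in radians.

    Assumed convention: yaw is positive to the right, pitch is positive
    upward (y points down, hence the sign flip).
    """
    x, y, z = direction
    yaw = math.atan2(x, z)
    pitch = math.asin(max(-1.0, min(1.0, -y)))  # clamp against rounding error
    return yaw, pitch

# Example: eye at the origin, looking at the cell in row 1, column 2.
target = letter_position(1, 2, origin=(-0.2, -0.15, 0.5), spacing=0.1)
yaw, pitch = to_yaw_pitch(gaze_direction((0.0, 0.0, 0.0), target))
```

Pairing each captured face image with the `(yaw, pitch)` of the letter the player was asked to look at would give one labelled training sample. A real system would also need camera calibration and eye localization, which this sketch leaves out.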

From July 3 to 7, a demonstration experiment for the AICOM project took place at the IIS Dining Lab, a dining facility where cutting-edge research is conducted to shape tomorrow's lifestyles.

The game system was installed at the Dining Lab, where a diverse group of people, including visitors to the facility, could try the game and contribute to the data collection. The response from participants was positive, with everyone enjoying the game and showing interest in research on gaze estimation AI. A small workshop was also held during the experiment, in which participants had lively discussions about gaze estimation techniques and AI applications.

The AICOM project aspires to extend its approach to other areas of AI research. By involving people from diverse backgrounds in a range of AI topics through physical, enjoyable experiences, the project aims to open up the AI research process to a broader audience and to develop AI informed by varied perspectives.